A Framework for Content-Adaptive Photo Manipulation Macros: Application to Face, Landscape, and Global Manipulations

Floraine Berthouzoz, Wilmot Li, Mira Dontcheva, Maneesh Agrawala

Abstract

We present a framework for generating content-adaptive macros that can transfer complex photo manipulations to new target images. We demonstrate applications of our framework to face, landscape, and global manipulations. To create a content-adaptive macro, we make use of multiple training demonstrations. Specifically, we use automated image labeling and machine learning techniques to learn the dependencies between image features and the parameters of each selection, brush stroke, and image processing operation in the macro. Although our approach is limited to learning manipulations where there is a direct dependency between image features and operation parameters, we show that our framework is able to learn a large class of the most commonly used manipulations using as few as 20 training demonstrations. Our framework also provides interactive controls to help macro authors and users generate training demonstrations and correct errors due to incorrect labeling or poor parameter estimation. We ask viewers to compare images generated by our content-adaptive macros, with and without corrections, to manually generated ground-truth images, and find that they consistently rate both our automatic and corrected results as close in appearance to the ground truth. We also evaluate the utility of our proposed macro-generation workflow via a small, informal lab study with professional photographers. The study suggests that our workflow is effective and practical in the context of real-world photo editing.
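The abstract does not say which learning method maps image features to operation parameters. The sketch below illustrates the general idea under stated assumptions. It supposes that a single scalar operation parameter depends linearly on a few numeric image features (for example, a hypothetical skin-color feature and a hypothetical color-cast feature), and it fits ordinary least squares over the training demonstrations. Linear regression is a stand-in chosen for illustration and is not necessarily the technique used in the paper. The function names and the synthetic data are hypothetical.

```python
from typing import List, Sequence


def _solve(a: List[List[float]], b: List[float]) -> List[float]:
    """Solve the small dense system a @ x = b by Gaussian elimination
    with partial pivoting."""
    n = len(b)
    # Augmented matrix [a | b], so row operations act on both at once.
    m = [list(row) + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("singular system: features are linearly dependent")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    # Back-substitution on the upper-triangular system.
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        s = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - s) / m[r][r]
    return x


def fit_parameter_model(features: Sequence[Sequence[float]],
                        params: Sequence[float]) -> List[float]:
    """Hypothetical helper: least-squares fit of
    param ~ w0 + w1*f1 + ... + wk*fk, one row per training demonstration."""
    rows = [[1.0] + list(f) for f in features]  # prepend intercept column
    k = len(rows[0])
    # Normal equations: (X^T X) w = X^T y.
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * y for r, y in zip(rows, params)) for i in range(k)]
    return _solve(xtx, xty)


def predict_parameter(weights: Sequence[float], feature: Sequence[float]) -> float:
    """Predict the operation parameter for a new target image's features."""
    return weights[0] + sum(w * f for w, f in zip(weights[1:], feature))


# Synthetic (made-up) demonstrations. The assumed "true" dependency is
# param = 0.1 + 0.6*f1 - 0.4*f2, which the fit should recover.
demo_features = [(i / 19, ((7 * i) % 20) / 19) for i in range(20)]
demo_params = [0.1 + 0.6 * f1 - 0.4 * f2 for f1, f2 in demo_features]
weights = fit_parameter_model(demo_features, demo_params)

target = (0.25, 0.9)                           # features of a new target image
learned = predict_parameter(weights, target)   # adapts to the target's content
copied = demo_params[0]                        # direct copying ignores the target
```

With these synthetic demonstrations, the learned value for the target changes with the target's features. The copied value, by contrast, stays fixed at whatever the first demonstration used. That contrast is the failure illustrated in panels (c-d) of the figure below.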

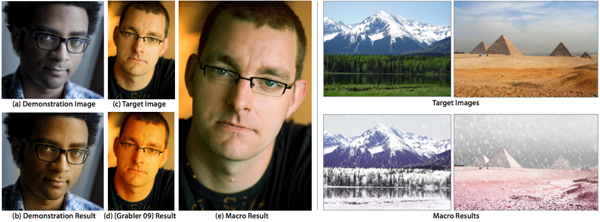

(Left) A user demonstrates a skin-tone correction manipulation on a photograph of a dark-skinned person with a blue cast (a-b). Using the approach of Grabler et al. [2009] to directly copy the same color adjustment parameters to a photograph of a light-skinned person turns his skin orange (c-d). Given 20 demonstrations of the manipulation, our content-adaptive macro learns the dependency between skin color, image color cast, and the color adjustment parameters, and successfully transfers the manipulation (e). (Right) After a snow manipulation is demonstrated on 20 landscape images, our content-adaptive macro transfers the effect to several target images. Image credits: (a) Ritwik Dey, (c) Dave Heuts; right: Ian Murphy, Tommy Wong.

Research Paper

Video

A Framework for Content-Adaptive Photo Manipulation Macros: Application to Face, Landscape, and Global Manipulations, posted by Floraine Berthouzoz on Vimeo.

More Results

Supplemental Materials

Supplemental PDF (7.6 MB)