3D Puppetry: A Kinect-based Interface for 3D Animation

Robert T. Held, Ankit Gupta, Brian Curless, Maneesh Agrawala

Abstract

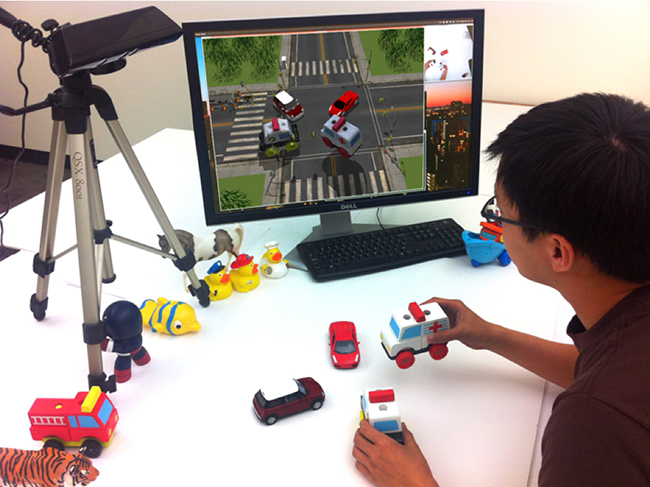

We present a system for producing 3D animations using physical objects (i.e., puppets) as input. Puppeteers can load 3D models of familiar rigid objects, including toys, into our system and use them as puppets for an animation. During a performance, the puppeteer physically manipulates these puppets in front of a Kinect depth sensor. Our system uses a combination of image-feature matching and 3D shape matching to identify and track the physical puppets. It then renders the corresponding 3D models into a virtual set. Our system operates in real time so that the puppeteer can immediately see the resulting animation and make adjustments on the fly. It also provides 6D virtual camera and lighting controls, which the puppeteer can adjust before, during, or after a performance. Finally, our system supports layered animations to help puppeteers produce animations in which several characters move at the same time. We demonstrate the accessibility of our system with a variety of animations created by puppeteers with no prior animation experience.

Our system allows puppeteers to use toys and other physical props to directly perform 3D animations.

Research Paper

PDF (8.8M) | Hi Res PDF (58.8M)

Additional Materials

Video

MP4 (43.5M) | Hi Quality MOV (340M) | YouTube

Animation Gallery

Stop, Look, and Listen MP4 (7.3M) | YouTube

The Bully Meets His Match MP4 (11.4M) | YouTube

Mean Minnow MP4 (2.3M) | YouTube

Clear the Crash MP4 (10.4M) | YouTube

Cheatin' Duck MP4 (4.4M) | YouTube

Sea Dance MP4 (6.4M) | YouTube

Penguin Mugger MP4 (2.7M) | YouTube